Crawlkit vs Skippership

Side-by-side comparison to help you choose the right product.

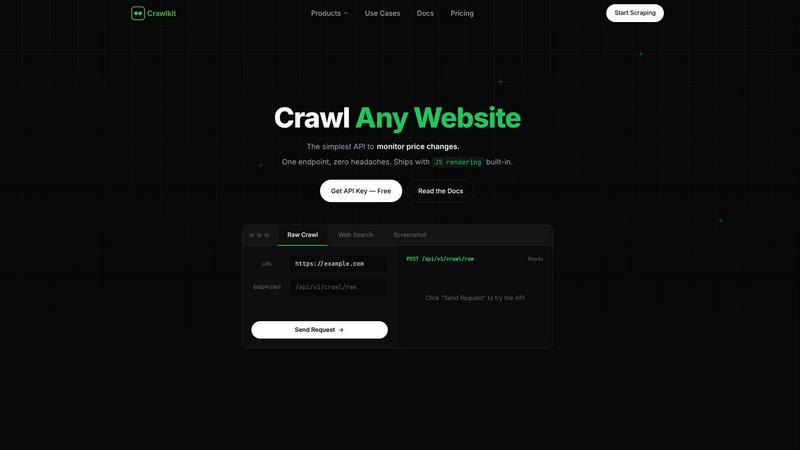

Crawlkit

Crawlkit is an API-first platform that turns any website into structured data with a single request.

Last updated: February 28, 2026

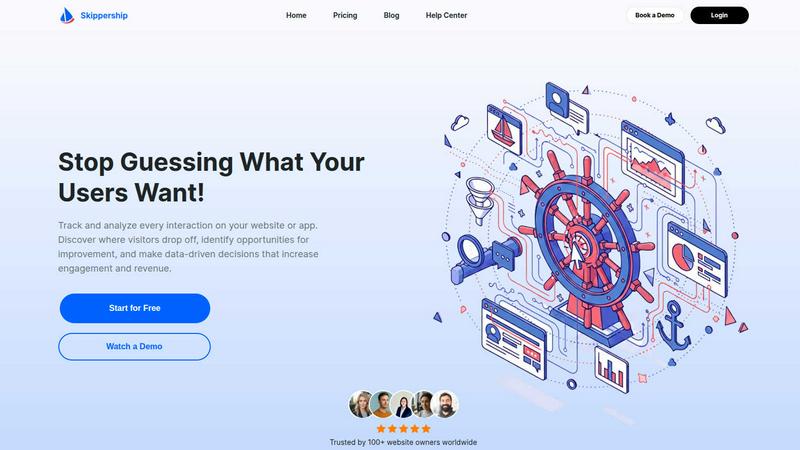

Skippership

Skippership analyzes user behavior to identify friction points and enhance website engagement and conversions.

Last updated: March 1, 2026

Feature Comparison

Crawlkit

Unified Multi-Platform API

Crawlkit provides a single, cohesive API endpoint to extract structured data from a vast array of disparate web sources. Instead of building and maintaining separate scrapers for LinkedIn, Instagram, search engines, and app stores, developers can interact with one consistent interface. This unification drastically reduces development time, simplifies code maintenance, and ensures a standardized data output format across all platforms, making data integration and processing significantly more efficient.
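As a rough illustration of what "one consistent interface" can look like from the caller's side, the sketch below builds (but does not send) requests for two different sources with the same helper. The base URL, path segments, JSON field name, and bearer-token header are all hypothetical placeholders, not Crawlkit's documented API.

```python
import json
import urllib.request

# Hypothetical base URL; the real Crawlkit endpoint layout may differ.
API_BASE = "https://api.example.invalid/v1"


def build_extract_request(source: str, target_url: str, api_key: str) -> urllib.request.Request:
    """Build (without sending) a POST request for one data source.

    The path segment, the "url" body field, and the Authorization scheme
    are assumptions made for illustration only.
    """
    body = json.dumps({"url": target_url}).encode("utf-8")
    return urllib.request.Request(
        url=f"{API_BASE}/{source}",
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
    )


# The same helper covers different sources; only the path segment changes.
linkedin_req = build_extract_request(
    "linkedin/profile", "https://www.linkedin.com/in/some-profile", "YOUR_KEY"
)
instagram_req = build_extract_request(
    "instagram/profile", "https://www.instagram.com/some-account", "YOUR_KEY"
)
```

The point of the sketch is the shape of the calling code: one request builder and one response format to handle, rather than a separate scraper per platform.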

Built-In Infrastructure Management

The platform handles the underlying infrastructure required for robust web scraping. This includes automated management of rotating residential and datacenter proxies to avoid IP bans, dynamic rendering of JavaScript-heavy pages using headless browser technology, and logic to get past anti-bot measures such as CAPTCHAs and browser fingerprinting. By handling these complexities internally, Crawlkit aims to deliver higher success rates and more reliable data than self-managed scrapers, without requiring infrastructure work from the user.

Transparent, Credit-Based Pricing

Crawlkit operates on a clear, pay-as-you-go credit system where each API call consumes a predetermined number of credits. This model offers full cost predictability with no monthly subscriptions, hidden fees, or surprise overage charges. Notably, credits are refunded if a request fails, and they never expire, providing exceptional flexibility. The pricing is transparently displayed per endpoint, and volume discounts are available for high-volume users, aligning cost directly with usage.

Guaranteed Data Completeness and Structure

Unlike basic HTTP clients that may return incomplete HTML, Crawlkit is engineered to ensure data completeness. It waits for full page loads, including all dynamic content rendered by JavaScript, and validates responses before delivery. The platform then parses the raw HTML into clean, structured JSON data, extracting the relevant fields (like follower counts, job titles, or review ratings) so users receive analysis-ready information instead of unstructured markup.

Skippership

Session Replays

Session Replays provide an unfiltered, video-like recording of real user interactions on your website or application. This feature allows you to observe exactly how visitors navigate, where they hesitate, click, or encounter errors. It is instrumental in uncovering usability issues, identifying points of confusion in forms or checkout processes, and understanding the context behind quantitative data like bounce rates. By watching these replays, teams can diagnose specific friction points and conversion blockers that aggregate statistics often miss, leading to more precise and effective optimizations.

Interactive Heatmaps

Skippership's Interactive Heatmaps offer a visual aggregation of user engagement data, showing where visitors most frequently click and move their mouse, and how far they scroll on any given page. Click heatmaps reveal the elements that attract attention, while scroll maps show content visibility and engagement depth. This feature is crucial for optimizing page layout, call-to-action placement, and content hierarchy. By identifying which sections are ignored, businesses can strategically reposition key information and buttons to guide user behavior, reduce cognitive load, and ultimately boost conversion rates.
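To make the idea of a click heatmap concrete, here is a minimal sketch of the aggregation step: bucketing raw click coordinates into a grid and counting clicks per cell. The function name, grid size, and sample data are illustrative assumptions; how Skippership actually computes its heatmaps is not described in this comparison.

```python
from collections import Counter


def click_heatmap(clicks, page_width, page_height, cols=4, rows=4):
    """Count clicks per grid cell and return a rows x cols list of lists.

    clicks is an iterable of (x, y) pixel coordinates. Clicks that fall
    outside the page bounds are ignored.
    """
    counts = Counter()
    for x, y in clicks:
        if not (0 <= x < page_width and 0 <= y < page_height):
            continue
        col = int(x * cols / page_width)
        row = int(y * rows / page_height)
        counts[(row, col)] += 1
    return [[counts[(r, c)] for c in range(cols)] for r in range(rows)]


# Hypothetical sample: three clicks near the top-left, one bottom-right,
# and one out-of-bounds click that is dropped.
clicks = [(50, 40), (60, 45), (900, 700), (55, 42), (-5, 10)]
grid = click_heatmap(clicks, page_width=1000, page_height=800, cols=2, rows=2)
```

Cells with high counts correspond to the "hot" areas a heatmap would color most intensely; cells that stay at zero are the ignored sections mentioned above.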

Goal and Event Tracking

This feature enables businesses to define, track, and analyze key performance indicators (KPIs) and specific user actions, such as form submissions, product purchases, button clicks, or sign-ups. Goal Tracking transforms vague aspirations into measurable metrics, allowing for real-time performance monitoring. It helps uncover patterns in user behavior that lead to conversions, facilitating A/B testing and providing clear evidence of what design or content changes directly impact business outcomes. This data-driven approach ensures that optimization efforts are focused on actions that genuinely drive results.
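A goal ultimately reduces to a simple ratio: the share of sessions in which the goal event occurred. The sketch below computes that conversion rate from a flat list of events. The event schema (`session`, `name`) and the event names are assumptions for illustration, not Skippership's data format.

```python
def conversion_rate(events, goal):
    """Return the fraction of distinct sessions that fired the goal event."""
    sessions = {e["session"] for e in events}
    converted = {e["session"] for e in events if e["name"] == goal}
    return len(converted) / len(sessions) if sessions else 0.0


# Hypothetical event log covering four sessions, one of which converts.
events = [
    {"session": "a", "name": "page_view"},
    {"session": "a", "name": "purchase_completed"},
    {"session": "b", "name": "page_view"},
    {"session": "c", "name": "page_view"},
    {"session": "c", "name": "form_submitted"},
    {"session": "d", "name": "page_view"},
]
rate = conversion_rate(events, "purchase_completed")
```

Comparing this rate before and after a design change, or between two variants in an A/B test, is what turns a goal into evidence about which changes affect outcomes.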

AI-Powered Insights and Analytics

Going beyond raw data presentation, Skippership's AI Analytics automatically processes vast amounts of behavioral data to surface meaningful patterns, trends, and actionable insights. The AI can highlight common drop-off points, detect recurring usability issues like "rage clicks," and suggest potential areas for improvement. This feature accelerates the analysis process, helping teams move faster from data collection to decision-making. It empowers users to understand the "why" behind user behavior, making it easier to prioritize fixes and enhancements that will have the most significant impact on engagement and revenue.
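"Rage clicks" are usually understood as several rapid clicks on roughly the same spot. The sketch below is a simple rule-based detector under that assumption. The thresholds (three clicks within one second, within 30 pixels of the first click) are illustrative defaults, and this is not a description of Skippership's AI models.

```python
from math import hypot


def find_rage_clicks(clicks, min_clicks=3, window_s=1.0, radius_px=30.0):
    """Return the start timestamps of click bursts.

    clicks is a list of (timestamp_seconds, x, y) tuples sorted by time.
    A burst is a run of at least min_clicks consecutive clicks that all
    occur within window_s seconds and radius_px pixels of the first one.
    """
    bursts = []
    i, n = 0, len(clicks)
    while i < n:
        t0, x0, y0 = clicks[i]
        j = i + 1
        while (
            j < n
            and clicks[j][0] - t0 <= window_s
            and hypot(clicks[j][1] - x0, clicks[j][2] - y0) <= radius_px
        ):
            j += 1
        if j - i >= min_clicks:
            bursts.append(t0)
            i = j  # skip past the clicks already counted in this burst
        else:
            i += 1
    return bursts


# Hypothetical session: four fast clicks on one spot, then two isolated clicks.
clicks = [
    (0.0, 100, 100), (0.2, 102, 101), (0.4, 101, 99), (0.6, 100, 100),
    (5.0, 400, 300), (9.0, 100, 100),
]
bursts = find_rage_clicks(clicks)
```

A detector like this only flags candidates; reviewing the matching session replay is still what explains why the user was frustrated.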

Use Cases

Crawlkit

CRM and Lead Enrichment

Sales and marketing teams can automate the enrichment of contact records in their Customer Relationship Management (CRM) systems. By programmatically feeding LinkedIn profile URLs into Crawlkit's API, they can pull structured data such as current job titles, company affiliations, professional summaries, and skills. This automates the manual research process, ensures data accuracy, and provides sales representatives with richer context for personalized outreach and lead scoring.

Competitive Intelligence and Market Research

Businesses can systematically monitor competitors by extracting public data from various sources. This includes tracking a competitor's Instagram growth metrics (follower count, engagement rates), analyzing reviews and ratings of their apps on the Play Store and App Store, or scraping their company details and job postings from LinkedIn. This aggregated, structured data fuels competitive analysis, informs strategic decisions, and identifies market trends.

Social Media Performance Tracking

Marketing agencies and brand managers can build automated dashboards to track the performance of social media campaigns. By regularly calling Crawlkit's Instagram API endpoints, they can gather historical data on profile growth, post engagement, and content performance for their own accounts or benchmark against industry influencers. This data is crucial for reporting, optimizing content strategy, and demonstrating ROI to clients.

App Store Optimization (ASO) Analysis

Mobile app developers and publishers can leverage Crawlkit to gather critical data for App Store Optimization. The API can extract detailed app metadata, user reviews, and ratings from both the Google Play Store and Apple App Store. Analyzing this structured data helps developers understand user sentiment, identify common complaints or requested features, monitor keyword performance, and benchmark against competing apps to improve their own app's visibility and conversion rates.

Skippership

E-commerce Conversion Rate Optimization

E-commerce managers utilize Skippership to pinpoint exactly where potential customers abandon their shopping carts. By analyzing session replays of failed checkouts and heatmaps on product pages, they can identify confusing form fields, unexpected shipping costs, or technical errors. Tracking goals for "Purchase Completed" allows them to measure the direct impact of changes, such as simplifying the checkout process or clarifying call-to-action buttons, leading to a measurable increase in sales and average order value.

SaaS Product Onboarding Improvement

Product teams at Software-as-a-Service companies employ Skippership to enhance user onboarding flows. Session replays reveal where new users get stuck or confused when first using the application. Heatmaps on tutorial pages show which help content is engaged with or skipped. By tracking goals for "Key Feature Activated" or "Subscription Upgraded," teams can iteratively refine the onboarding experience, reduce time-to-value, and improve user retention rates by ensuring customers successfully discover core product benefits.

Content and Blog Engagement Analysis

Website owners and content marketers use Skippership to understand how visitors interact with blog posts and articles. Scroll heatmaps indicate how far readers progress and which sections hold attention, while click heatmaps show which internal links or promotional banners are effective. This data informs content structure, ideal article length, and placement of subscription forms or related content suggestions, ultimately increasing page engagement, average session duration, and lead generation from content marketing efforts.
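The scroll-depth part of this analysis can be sketched as a reach calculation: for each depth threshold, what fraction of sessions scrolled at least that far. The function name, thresholds, and sample data below are assumptions chosen for illustration.

```python
def scroll_reach(max_depths, thresholds=(25, 50, 75, 100)):
    """Map each depth threshold (percent of page) to the share of sessions reaching it.

    max_depths holds one value per session: the deepest scroll position
    reached, as a percentage of page height.
    """
    total = len(max_depths)
    return {
        t: (sum(d >= t for d in max_depths) / total if total else 0.0)
        for t in thresholds
    }


# Hypothetical maximum scroll depths for five reader sessions.
depths = [100, 80, 55, 30, 10]
reach = scroll_reach(depths)
```

A sharp drop between two thresholds suggests where readers lose interest, which is the signal used to decide article length or where to place a subscription form.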

UX and Usability Testing

User Experience designers and researchers leverage Skippership as a continuous, remote usability testing tool. Instead of organizing costly lab studies, they can review anonymous session replays to observe real users interacting with new designs or features in their natural environment. Identifying rage clicks, excessive scrolling, or navigation loops provides direct feedback on how intuitive a design is. This allows for rapid, evidence-based iterations of wireframes and prototypes before full development, saving time and resources while improving the final product's usability.

Overview

About Crawlkit

Crawlkit is a sophisticated, developer-centric web data extraction platform engineered to transform the complex, often frustrating process of web scraping into a simple, reliable, and scalable API service. Its core value proposition is to "Turn the Web into an API," providing developers and data teams with structured data from virtually any website or online platform through a single, unified interface. The platform is meticulously designed to abstract away the immense technical overhead traditionally associated with data collection, including the management of rotating proxy networks, execution of headless browsers, circumvention of sophisticated anti-bot protections, and adherence to platform-specific rate limits. This allows users to shift their focus entirely from the mechanics of data gathering to the strategic analysis and utilization of the data itself. Catering to a broad spectrum of users, from agile startups to large-scale enterprises, Crawlkit supports extraction from diverse sources like LinkedIn for professional networking data, Instagram for social media metrics, Google and DuckDuckGo for search results, and major app stores for application details and reviews. With a transparent, credit-based pricing model, no monthly commitments, and a promise that credits never expire, Crawlkit positions itself as a flexible, cost-effective, and powerful foundation for building data-driven applications and workflows.

About Skippership

Skippership is a comprehensive, AI-powered analytics and user experience optimization platform designed to transform how businesses understand and interact with their website and application visitors. It moves beyond traditional analytics by providing a unified suite of tools that visualize actual user behavior, enabling organizations to move from speculation to data-driven action. The platform is engineered for digital marketers, product managers, UX designers, and website owners who require deep, actionable insights into user journeys without the complexity of fragmented tools. Its core value proposition lies in delivering "Maximum Clarity" through an all-in-one, intuitive interface that combines qualitative and quantitative data. By leveraging session recordings, interactive heatmaps, conversion goal tracking, and proprietary AI analytics, Skippership identifies precise friction points, conversion blockers, and untapped opportunities for improvement. This empowers teams to make confident decisions that directly enhance user engagement, strengthen customer retention, and increase revenue. With a strong emphasis on privacy, security, and ease of use—featuring a no-code setup and minimal performance impact—Skippership is built to scale with businesses of any size, providing the visibility needed to optimize online presence effectively and efficiently.

Frequently Asked Questions

Crawlkit FAQ

What happens if an API request fails?

Crawlkit refunds credits for failed requests. If an API call does not return the requested structured data because of a problem on Crawlkit's side (such as a parsing error or infrastructure problem), the credits spent on that request are automatically refunded to your account, so you pay only for successful, usable data delivery.

Do I need to manage proxies or browsers?

No, absolutely not. One of Crawlkit's primary value propositions is the complete abstraction of infrastructure management. The platform automatically handles all aspects of proxy rotation, browser emulation, and session management to navigate anti-bot measures. As a user, you simply send an API request with your target URL and receive structured data, with no need to configure or maintain any underlying scraping infrastructure.

How does the credit system work?

Crawlkit uses a credit-based pricing model. You purchase a bundle of credits upfront, and each API endpoint costs a fixed, published number of credits per call (e.g., a LinkedIn profile may cost 2 credits). You pay only for successful requests: credits spent on a failed request are refunded. Credits have no expiration date, and you can purchase more at any time, with volume discounts applied for larger bundles. There are no recurring monthly fees.
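The accounting described here can be sketched as a small ledger in which a failed request has no net cost. Only the 2-credit LinkedIn figure comes from the example above; the other endpoint cost, the endpoint names, and the class itself are hypothetical.

```python
from dataclasses import dataclass, field

# Illustrative costs. Only the LinkedIn figure is taken from the FAQ example;
# the Instagram cost is a made-up placeholder.
ENDPOINT_COSTS = {"linkedin_profile": 2, "instagram_profile": 1}


@dataclass
class CreditLedger:
    balance: int
    history: list = field(default_factory=list)

    def charge(self, endpoint: str, succeeded: bool) -> int:
        """Record one API call and return the credits actually spent.

        A failed call is modeled as costing nothing, which is the net
        effect of deducting credits and then refunding them.
        """
        cost = ENDPOINT_COSTS[endpoint]
        if cost > self.balance:
            raise ValueError("insufficient credits")
        spent = cost if succeeded else 0
        self.balance -= spent
        self.history.append((endpoint, succeeded, spent))
        return spent


ledger = CreditLedger(balance=10)
ledger.charge("linkedin_profile", succeeded=True)   # spends 2 credits
ledger.charge("linkedin_profile", succeeded=False)  # failed call, no net cost
```

Because credits do not expire and there is no monthly fee, the remaining balance is the only state a budget forecast needs to track.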

Can I request a new data source or API endpoint?

Yes, Crawlkit actively encourages user feedback for new integrations. The platform states, "Need an API we don't have yet? Talk to us, we'll build it." Users can contact the Crawlkit team to request support for additional websites, social platforms, or specific data extraction needs. This collaborative approach ensures the platform evolves to meet the real-world requirements of its developer community.

Skippership FAQ

How does Skippership ensure user privacy and data security?

Skippership is built with a privacy-first architecture. It does not process personal or sensitive user data and is designed to comply with major regulations like GDPR and CCPA. All data is encrypted in transit using SSL/TLS and securely stored on reliable cloud infrastructure. The platform allows for easy data management and provides tools to respect visitor consent preferences, ensuring that businesses can gain insights while maintaining the highest standards of data protection and ethical data collection practices.

What is the performance impact of installing Skippership on my website?

Skippership is engineered for minimal performance impact. The tracking script is lightweight and asynchronous, meaning it loads without blocking other elements of your website. The platform employs efficient data collection and processing methods to ensure your site's loading speed and overall user experience are not compromised. This allows you to gather comprehensive behavioral analytics without sacrificing the core performance metrics that are also crucial for SEO and user satisfaction.

Can Skippership integrate with other tools in my tech stack?

Yes, Skippership offers robust integration capabilities with over 50 popular platforms to streamline workflows and unify data. This includes direct integrations with content management systems like WordPress, Joomla, and Drupal; e-commerce platforms like Shopify, WooCommerce, and Magento; and marketing tools like Google Analytics and HubSpot. These integrations allow you to correlate behavioral insights with other business data, creating a more holistic view of your customer journey and operational effectiveness.

How quickly can I start getting insights after setting up Skippership?

Setup is designed to be fast and code-free for most standard websites. Typically, you can install the provided tracking snippet and begin collecting data within minutes. Some data, such as session replays, is available almost immediately. Comprehensive heatmaps and AI-driven insights need a short period of data collection first (usually a few days to a week, depending on your site traffic), so that the patterns they show rest on enough sessions to be reliable and actionable.

Alternatives

Crawlkit Alternatives

Crawlkit is a prominent API-first web scraping platform within the analytics and data category, designed to streamline the extraction of web data for developers and data teams. It abstracts the complexities of managing proxies, headless browsers, and anti-bot measures, allowing users to focus on data utilization rather than infrastructure. Users often explore alternatives to Crawlkit for various reasons, including budget constraints, specific feature requirements not covered by the platform, or the need for a different deployment model such as on-premise solutions. The search can also be driven by project scale, desired integration capabilities, or particular data source specializations. When evaluating an alternative, key considerations include the platform's success rate in data extraction, its ability to handle JavaScript-rendered content, the sophistication of its anti-blocking technology, and the clarity of its pricing structure. Scalability, data delivery speed, and the quality of developer documentation and support are also critical factors that determine long-term viability for data-intensive projects.

Skippership Alternatives

Skippership is a comprehensive AI-powered analytics and user behavior platform. It falls within the digital analytics and user experience optimization category, providing tools like session replays and heatmaps to help businesses understand and improve website engagement. Users may seek alternatives to Skippership for various reasons. Common considerations include budget constraints and specific pricing models, the need for different feature sets or integrations, or requirements for analyzing particular platforms like mobile apps or e-commerce systems. The scale of the website and the desired depth of technical support also influence the search for a suitable tool. When evaluating an alternative, key factors to assess include the core feature alignment with your goals, such as session recording fidelity and heatmap types. Data privacy compliance, ease of implementation, the quality of actionable insights generated, and the overall cost relative to the value provided are all critical dimensions for a thorough comparison.