LLMWise vs PoYo API

Side-by-side comparison to help you choose the right product.

LLMWise

LLMWise offers a single API to access and compare top AI models like GPT, Claude, and Gemini.

Last updated: February 26, 2026

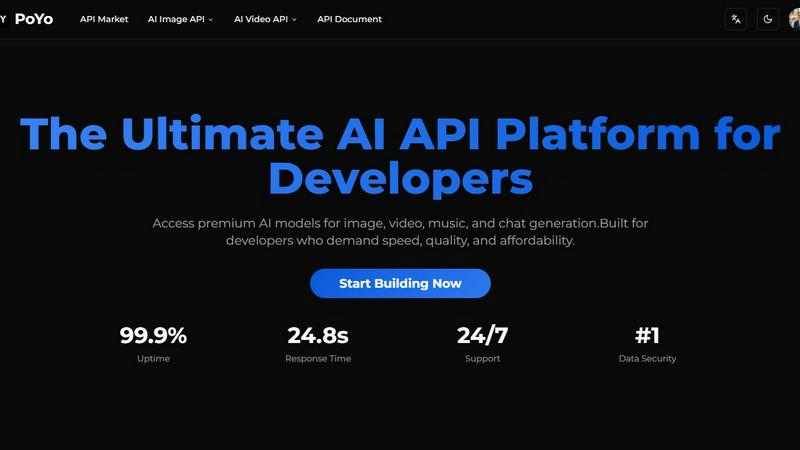

PoYo API

PoYo API provides unified developer access to premium AI models for image, video, music, and chat generation.

Last updated: February 28, 2026

Feature Comparison

LLMWise

Smart Routing

Smart routing automatically selects a model for each prompt. When a user submits a request, LLMWise directs it to the model best suited to the task, whether that is coding, creative writing, or translation, so users do not have to choose a model manually for each request.
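To make the idea concrete, here is a minimal sketch of task-based routing. It is not LLMWise's actual routing logic: the `ROUTES` table, the keyword hints, and the `route_prompt` function are hypothetical, and the task-to-model pairings simply mirror the examples used elsewhere in this comparison.

```python
# Hypothetical routing table; the pairings mirror the examples in this
# comparison and are not LLMWise's real routing rules.
ROUTES = {
    "coding": "gpt",
    "creative_writing": "claude",
    "translation": "gemini",
}

# Assumed keyword hints per task, purely illustrative.
KEYWORDS = {
    "coding": ("function", "bug", "code", "python"),
    "translation": ("translate", "translation"),
    "creative_writing": ("story", "poem", "slogan"),
}

DEFAULT_TASK = "creative_writing"


def classify(prompt: str) -> str:
    """Return the task whose keywords appear most often in the prompt."""
    words = prompt.lower().split()
    scores = {
        task: sum(words.count(hint) for hint in hints)
        for task, hints in KEYWORDS.items()
    }
    best = max(scores, key=scores.get)
    # Fall back to a default task when no keyword matches at all.
    return best if scores[best] > 0 else DEFAULT_TASK


def route_prompt(prompt: str) -> str:
    """Map a prompt to a model family via its classified task."""
    return ROUTES[classify(prompt)]
```

A production router would likely use a learned classifier rather than keyword counts; the sketch only shows the shape of the decision.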

Compare & Blend

Compare & Blend runs a prompt across several models side by side. Users can compare the responses directly and blend the strongest parts of each output into a single, cohesive answer, which can be richer and more nuanced than any one model's response.

Always Resilient

LLMWise uses a circuit-breaker failover mechanism. If one provider goes down, requests are rerouted to backup models, so applications built on LLMWise stay available during unexpected provider outages.
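The circuit-breaker pattern itself is well known, and a generic version can be sketched as follows. This is an illustration of the pattern under stated assumptions, not LLMWise's implementation: the `CircuitBreaker` class, `call_with_failover`, and the threshold and cool-down values are all hypothetical.

```python
import time


class CircuitBreaker:
    """Opens after `max_failures` consecutive errors; allows a retry after `reset_after` seconds."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the breaker is closed

    def available(self):
        if self.opened_at is None:
            return True
        # Half-open: allow a trial call once the cool-down has elapsed.
        return self.clock() - self.opened_at >= self.reset_after

    def record_success(self):
        self.failures = 0
        self.opened_at = None

    def record_failure(self):
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()


def call_with_failover(providers, breakers, prompt):
    """Try providers in order, skipping any whose breaker is open."""
    for name, call in providers:
        breaker = breakers[name]
        if not breaker.available():
            continue
        try:
            result = call(prompt)
        except Exception:
            breaker.record_failure()
            continue
        breaker.record_success()
        return name, result
    raise RuntimeError("all providers unavailable")
```

Once a provider's breaker opens, later requests skip it immediately instead of waiting for another failure, which is what keeps failover fast during an outage.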

Test & Optimize

With built-in benchmarking suites and batch tests, users can optimize their API usage for speed, cost, or reliability. Developers can also set up automated regression checks to confirm that performance stays consistent over time.
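As a rough illustration of optimizing for speed, cost, or reliability, the sketch below summarizes batch-test runs per model and picks the best one for a chosen goal. The record fields (`latency_s`, `cost`, `ok`) and the functions `summarize` and `best_model` are assumptions made for this example, not part of LLMWise's benchmarking suite.

```python
from statistics import mean


def summarize(runs):
    """Aggregate one model's batch-test runs.

    Each run is a dict with assumed keys: 'latency_s', 'cost', 'ok'.
    """
    return {
        "latency_s": mean(r["latency_s"] for r in runs),
        "cost": mean(r["cost"] for r in runs),
        "reliability": sum(r["ok"] for r in runs) / len(runs),
    }


def best_model(results, goal):
    """Pick the model that is best for `goal`.

    `goal` is 'latency_s', 'cost', or 'reliability'.
    Lower is better for latency and cost; higher is better for reliability.
    """
    summaries = {model: summarize(runs) for model, runs in results.items()}
    if goal == "reliability":
        return max(summaries, key=lambda m: summaries[m]["reliability"])
    return min(summaries, key=lambda m: summaries[m][goal])
```

A regression check could rerun the same batch on a schedule and flag any model whose summary drifts past a chosen threshold.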

PoYo API

Unified Multi-Model API Access

PoYo API provides a single, consolidated endpoint for accessing a curated library of over 500 leading AI models spanning image, video, music, and chat generation. This eliminates the need for developers to manage multiple API keys, SDKs, and billing accounts across different providers. The platform's standardized interface simplifies integration, reduces code complexity, and accelerates development cycles, allowing teams to focus on building application logic rather than managing vendor-specific integrations.

Flexible Credit-Based Pricing Model

The platform uses a pay-as-you-go credit system rather than subscription plans. Users purchase credits that never expire and are consumed according to the model used and the complexity of the task. Developers can scale usage up or down with real demand without being locked into fixed monthly fees or paying for unused capacity.

Enterprise-Grade Security & Reliability

PoYo API describes its design as a zero-knowledge architecture in which API keys and user credentials are stored with industry-standard encryption. The platform guarantees 99.9% operational uptime, backed by monitoring systems, and provides full audit logging for compliance. These properties make it suitable for sensitive data and mission-critical production workloads where data integrity and service availability matter most.

Comprehensive Developer Tools & Support

Developer tools include a free playground for testing all models without a credit card, a simple two-endpoint async API design, and webhook support for real-time task callbacks. These are complemented by 24/7 dedicated technical support and a dashboard with manual retry for failed tasks, giving developers control and assistance throughout integration and deployment.

Use Cases

LLMWise

Software Development

Developers can use LLMWise to streamline coding tasks by sending code-related prompts to the most capable models, such as GPT, and receiving suggestions and code snippets in return.

Creative Writing

Writers and content creators can use LLMWise to generate creative content. Smart routing can direct their prompts to models like Claude, which excel at narrative and creative writing, to produce stories or marketing copy.

Translation Services

Businesses requiring translation services can benefit from LLMWise by routing their translation prompts to models like Gemini. This ensures high-quality translations that maintain the original meaning and tone, providing companies with reliable multilingual support.

Market Research

Market researchers can utilize LLMWise to analyze and synthesize large volumes of data. By comparing outputs from multiple models, researchers can gain diverse perspectives on market trends, consumer behavior, and competitive analysis, leading to more informed decision-making.

PoYo API

Content Creation & Marketing Automation

Marketing teams and content platforms can leverage PoYo API to automate the generation of high-quality visual and audio content at scale. This includes creating promotional images, social media videos, advertising jingles, and product demo scripts using models like Nano Banana Pro, Sora-2, and Suno AI. The API's concurrency and speed enable the rapid production of personalized content for campaigns, significantly reducing manual effort and time-to-market.

AI-Powered Application Development

Software development companies and indie developers can integrate state-of-the-art AI capabilities directly into their applications. This allows for building features such as in-app image editors, video generation tools, AI music composers, or intelligent chatbots powered by models like GPT-5 and Claude Sonnet, all through a single API integration, enhancing product value and user engagement without managing underlying AI infrastructure.

Research & Prototyping

Researchers, data scientists, and product teams can use the platform's free playground and flexible credits to rapidly prototype and test various AI models for specific use cases. They can compare outputs from different image, video, or music generation models to determine the best fit for their project's quality, style, and cost requirements before committing to full-scale integration and development.

Media & Entertainment Production

Studios and independent creators can utilize the advanced video and music APIs for storyboarding, generating visual effects, creating background scores, or even producing short-form content. Access to models like Veo3.1 and the complete Suno suite (for lyrics-to-song, vocal removal, etc.) provides professional-grade tools that can augment traditional production pipelines with AI-driven creativity.

Overview

About LLMWise

LLMWise is a platform that streamlines the use of large language models (LLMs) from several leading AI providers. Through a single API, developers can access models from OpenAI, Anthropic, Google, Meta, xAI, and DeepSeek, among others, so they no longer need to juggle multiple AI subscriptions or manage separate API keys for different tasks. LLMWise routes each request to the most suitable model, whether the task is coding, creative writing, or translation. Features such as smart routing, output blending, and circuit-breaker failover are aimed at developers who want resilient AI applications without the complexity of managing multiple services. Pricing is pay-as-you-go: users pay only for what they use.

About PoYo API

PoYo API is a centralized platform that gives developers access to over 500 premium AI models through a single gateway. It covers generation across image, video, music, and conversational chat. Designed for developers, startups, and enterprises, it replaces separate integrations with individual AI services with one interface and a single API key. Its stated strengths are output quality, sub-50ms response times, and a credit-based pricing model that charges only for actual usage, with no mandatory recurring subscription. Available models include Sora-2 for video, Nano Banana Pro for high-resolution imagery, and GPT-5 for chat, backed by enterprise-grade security and 99.9% uptime.

Frequently Asked Questions

LLMWise FAQ

How does LLMWise ensure optimal model selection?

LLMWise employs a smart routing mechanism that analyzes each prompt and determines the most suitable model for the specific task, thereby enhancing the quality of responses.

Can I use my existing API keys with LLMWise?

Yes, LLMWise allows you to bring your own API keys, which means you can use your existing keys at provider prices or opt for pay-per-use with LLMWise credits, offering flexibility in billing.

What happens if an AI provider goes down?

LLMWise features a circuit-breaker failover system that automatically reroutes requests to backup models when a provider is unavailable, ensuring that your applications remain operational without interruption.

Is there a subscription fee for using LLMWise?

No, LLMWise operates on a pay-as-you-go model. Users pay only for what they use, starting from $0, and do not incur any monthly subscription fees, making it a cost-effective solution for accessing multiple models.

PoYo API FAQ

What is the PoYo API credit system and how does it work?

PoYo API uses a prepaid credit system where you purchase credits that are then consumed based on the AI model and task you execute. Each model has a defined credit cost per task (e.g., per image generation, per second of video). Credits do not expire, and you only pay for successful generations, with no charges for failed tasks. This system replaces traditional monthly subscriptions, offering greater flexibility and cost control.
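The accounting rule described above (prepaid credits, a per-task cost that depends on the model, and no charge for failed tasks) can be sketched as follows. The model names, credit prices, and the `CreditAccount` class are hypothetical and do not reflect PoYo API's actual price list or SDK.

```python
# Hypothetical credit prices per unit of work (per image, per second of video).
CREDIT_COST = {
    "image-model": 5,
    "video-model": 2,
}


def task_cost(model: str, units: int) -> int:
    """Credits needed for `units` of work on `model`."""
    return CREDIT_COST[model] * units


class CreditAccount:
    def __init__(self, balance: int):
        self.balance = balance

    def run(self, model: str, units: int, task):
        """Run `task` and deduct credits only if it succeeds."""
        cost = task_cost(model, units)
        if cost > self.balance:
            raise ValueError("insufficient credits")
        result = task()       # an exception here leaves the balance untouched
        self.balance -= cost  # charged only after a successful generation
        return result
```

For example, with these assumed prices, generating two images costs 10 credits, while a video task that raises an error costs nothing.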

How does PoYo API ensure low latency and handle high-volume requests?

The platform reports an average response time of under 50ms and is built to handle large numbers of parallel requests. Long-running tasks such as video generation use an asynchronous API with webhook callback support, so your application is not blocked waiting for a response. Combined with the backend infrastructure, this design supports the high concurrency and low latency needed for scalable production applications.
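An asynchronous submit-then-query flow of this kind is commonly consumed with a polling loop when webhooks are not in use. The sketch below assumes two caller-supplied functions, `submit` and `get_status`, standing in for the two endpoints; their names, the `state` field, and its values are assumptions for illustration, not PoYo API's documented interface.

```python
import time


def wait_for_task(submit, get_status, payload,
                  poll_interval=1.0, timeout=60.0,
                  sleep=time.sleep, clock=time.monotonic):
    """Submit a task, then poll its status until it finishes or times out.

    `submit(payload)` is assumed to return a task id, and
    `get_status(task_id)` a dict with a 'state' key.
    """
    task_id = submit(payload)
    deadline = clock() + timeout
    while clock() < deadline:
        status = get_status(task_id)
        if status["state"] in ("succeeded", "failed"):
            return status
        sleep(poll_interval)
    raise TimeoutError(f"task {task_id} did not finish within {timeout}s")
```

With a webhook, the polling loop goes away: the application submits the task, returns immediately, and handles the result when the callback arrives.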

Is there a way to test the AI models before purchasing credits?

Yes, PoYo API provides completely free playground access on each model's dedicated page. You can experiment with various generation parameters, test prompts, and evaluate the output quality of any model in the library without needing to sign up for a paid plan or provide credit card details. This allows for thorough testing and debugging before integrating the API into your application.

What security measures are in place to protect my API keys and data?

PoYo API employs enterprise-grade security practices, including a zero-knowledge architecture. Your API keys are encrypted using industry-standard protocols. The platform ensures that your credentials and data are protected, with full audit logging available for security monitoring and compliance purposes. This makes it a secure choice for developers handling sensitive information or building applications for regulated industries.

Alternatives

LLMWise Alternatives

LLMWise is an API platform for accessing large language models (LLMs) from providers including OpenAI, Anthropic, Google, Meta, xAI, and DeepSeek. As a solution in the AI Assistants category, it simplifies managing multiple AI providers through intelligent routing that matches prompts to the most suitable model. Users may seek alternatives because of pricing structures, feature sets that better fit their needs, or compatibility with existing platforms. When evaluating alternatives, consider ease of integration, performance across different tasks, flexibility in payment options, and the ability to customize features.

PoYo API Alternatives

PoYo API is a centralized platform in the AI Assistants and generative AI category, providing developers with unified access to a vast library of over 500 premium models for image, video, music, and chat generation. It simplifies integration through a single API key and employs a flexible, consumption-based credit system. Developers may seek alternatives for various reasons, including specific budgetary constraints, the need for different pricing models like monthly subscriptions, or requirements for niche AI models not covered by a generalist platform. Others might prioritize direct relationships with model providers or have unique compliance and data residency needs that influence their platform choice. When evaluating alternatives, key considerations include the breadth and specialization of the available AI models, the overall cost structure and transparency, performance metrics like latency and uptime, and the robustness of security and support frameworks. The ideal platform aligns with both the technical demands of the application and the strategic goals of the development project.