LLMWise vs Prompt Builder

Side-by-side comparison to help you choose the right product.

LLMWise offers a single API to access and compare top AI models such as GPT, Claude, and Gemini.

Last updated: February 26, 2026

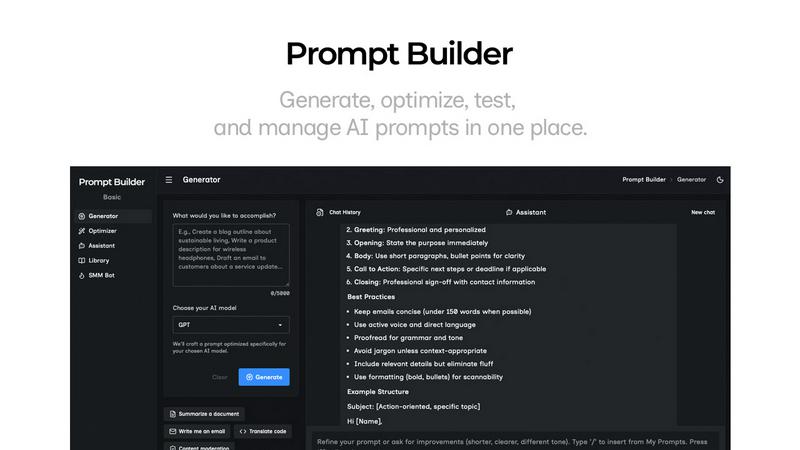

Prompt Builder

Prompt Builder is a unified platform to generate, optimize, test, and manage AI prompts for models like ChatGPT and Gemini.

Last updated: April 13, 2026

Feature Comparison

LLMWise

Smart Routing

Smart routing automatically selects a model for each prompt. When a user submits a request, LLMWise directs it to the model best suited to the task at hand, whether that is coding, creative writing, or translation. Users get a well-matched model without having to choose one manually for each task.
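The routing idea can be illustrated with a minimal sketch. It is not LLMWise's actual routing logic or API: the keyword lists, the `classify_task` and `route` helpers, and the short model labels are all hypothetical, and the task-to-model pairing simply mirrors the examples given in the use cases on this page.

```python
from typing import Dict, Tuple

# Hypothetical routing table: task -> (model label, trigger keywords).
# The keywords and labels are illustrative assumptions only.
ROUTES: Dict[str, Tuple[str, Tuple[str, ...]]] = {
    "coding": ("gpt", ("function", "bug", "code", "compile")),
    "translation": ("gemini", ("translate", "translation")),
    "creative": ("claude", ("story", "poem", "slogan")),
}
DEFAULT_MODEL = "gpt"  # assumed fallback when no task is recognised


def classify_task(prompt: str) -> str:
    """Return the first task whose keywords appear in the prompt."""
    text = prompt.lower()
    for task, (_model, keywords) in ROUTES.items():
        if any(word in text for word in keywords):
            return task
    return "general"


def route(prompt: str) -> str:
    """Pick a model label for the prompt based on its classified task."""
    task = classify_task(prompt)
    if task in ROUTES:
        return ROUTES[task][0]
    return DEFAULT_MODEL


if __name__ == "__main__":
    print(route("Translate this sentence into French"))
    print(route("Write a short story about a lighthouse"))
```

A production router would more plausibly use a classifier or model metadata rather than keyword matching; the sketch only shows the shape of the decision.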

Compare & Blend

The Compare & Blend feature allows users to run prompts across different models side-by-side. This capability not only facilitates direct comparison of responses but also enables users to blend the strongest aspects of each output into a single, cohesive answer. This synthesis of information leads to richer, more nuanced results that can significantly enhance the quality of the final output.
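As a rough illustration of comparing and blending, the sketch below sends one prompt to several model callables and merges the distinct sentences from their answers. Everything here is an assumption for demonstration: `compare`, `blend`, and the stub models are hypothetical, and a real blending step would more likely use another model to synthesize the answers than simple sentence de-duplication.

```python
from typing import Callable, Dict, List

ModelFn = Callable[[str], str]  # a stand-in for a call to one model


def compare(prompt: str, models: Dict[str, ModelFn]) -> Dict[str, str]:
    """Run the same prompt against every model and collect the answers."""
    return {name: fn(prompt) for name, fn in models.items()}


def blend(responses: Dict[str, str]) -> str:
    """Naive blend: keep each distinct sentence once, in first-seen order."""
    seen = set()
    merged: List[str] = []
    for text in responses.values():
        for sentence in text.split(". "):
            cleaned = sentence.strip().rstrip(".")
            key = cleaned.lower()
            if cleaned and key not in seen:
                seen.add(key)
                merged.append(cleaned)
    if not merged:
        return ""
    return ". ".join(merged) + "."


if __name__ == "__main__":
    # Stub models with canned answers, used only to exercise the helpers.
    stubs = {
        "model-a": lambda p: "Paris is the capital. It is on the Seine.",
        "model-b": lambda p: "Paris is the capital. It hosts the Louvre.",
    }
    side_by_side = compare("Tell me about Paris", stubs)
    print(side_by_side)
    print(blend(side_by_side))
```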

Always Resilient

LLMWise includes an always-resilient architecture featuring a circuit-breaker failover mechanism. This ensures that if one provider goes down, the system can reroute requests to backup models seamlessly, preventing any disruption in service. As a result, applications utilizing LLMWise can maintain reliability and availability, even in the face of unexpected provider outages.
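The circuit-breaker pattern behind this kind of failover can be sketched as follows. This is a generic illustration of the pattern, not LLMWise's implementation: the `CircuitBreaker` class, the `call_with_failover` helper, and the threshold and cooldown values are assumptions chosen for the example.

```python
import time
from typing import Callable, List, Optional, Tuple


class CircuitBreaker:
    """Opens after repeated failures, then allows a trial call after a cooldown."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0):
        self.max_failures = max_failures  # assumed threshold
        self.reset_after = reset_after    # assumed cooldown in seconds
        self.failures = 0
        self.opened_at: Optional[float] = None

    def available(self) -> bool:
        if self.opened_at is None:
            return True
        # Half-open: permit one trial request once the cooldown has elapsed.
        return time.monotonic() - self.opened_at >= self.reset_after

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = time.monotonic()


Provider = Tuple[str, Callable[[str], str], CircuitBreaker]


def call_with_failover(prompt: str, providers: List[Provider]) -> Tuple[str, str]:
    """Try providers in order, skipping any whose breaker is open."""
    for name, fn, breaker in providers:
        if not breaker.available():
            continue
        try:
            result = fn(prompt)
        except Exception:
            breaker.record_failure()
            continue
        breaker.record_success()
        return name, result
    raise RuntimeError("all providers unavailable")
```

Once a provider's breaker opens, later requests skip it immediately instead of waiting for another failure, which is what keeps an outage from slowing every call.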

Test & Optimize

With built-in benchmarking suites and batch tests, LLMWise allows users to optimize their API usage based on speed, cost, or reliability. Developers can implement automated regression checks to ensure consistent performance over time. This feature supports continuous improvement and helps teams to fine-tune their AI integrations for maximum efficiency and effectiveness.
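One way to picture optimizing for speed, cost, or reliability, plus a regression check, is the sketch below. The `BenchmarkResult` fields, the `pick_best` and `has_regressed` helpers, and the 10% tolerance are hypothetical and do not describe LLMWise's benchmarking suite.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class BenchmarkResult:
    model: str
    latency_ms: float    # average response time
    cost_usd: float      # average cost per request
    success_rate: float  # fraction of requests that succeeded, 0.0 to 1.0


def pick_best(results: Sequence[BenchmarkResult], objective: str) -> BenchmarkResult:
    """Select the best result for one objective: speed, cost, or reliability."""
    if objective == "speed":
        return min(results, key=lambda r: r.latency_ms)
    if objective == "cost":
        return min(results, key=lambda r: r.cost_usd)
    if objective == "reliability":
        return max(results, key=lambda r: r.success_rate)
    raise ValueError(f"unknown objective: {objective}")


def has_regressed(
    baseline: BenchmarkResult, current: BenchmarkResult, tolerance: float = 0.10
) -> bool:
    """Flag a run that is worse than the baseline by more than the tolerance."""
    return (
        current.latency_ms > baseline.latency_ms * (1 + tolerance)
        or current.cost_usd > baseline.cost_usd * (1 + tolerance)
        or current.success_rate < baseline.success_rate * (1 - tolerance)
    )
```

A check like `has_regressed` could run in a scheduled job or CI step, comparing each new batch test against a stored baseline.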

Prompt Builder

Prompt Generator

The Prompt Generator is the foundational feature that transforms a simple idea into a robust, model-specific prompt. Users describe their intended task in natural language and select their target AI model (e.g., GPT-4, Claude 3, Gemini Pro). The system then crafts a detailed prompt draft tailored to the selected model's nuances and optimal prompting structures. This includes appropriate formatting, context setting, and constraint phrasing, providing a solid, professional-grade starting point that can be further refined in the integrated chat workspace, significantly reducing initial setup time.

Prompt Assistant & Chat Workspace

This integrated feature allows for testing and iteration of prompts without leaving the Prompt Builder environment. The Prompt Assistant provides a chat-first interface where users can run their generated or optimized prompts. It includes a model selector to test outputs across different assistants such as Grok, Gemini, or DeepSeek. Users can engage in follow-up conversations, make adjustments, and see results in real time. All chat histories are preserved, which enables fast iteration and prevents successful prompt versions from being lost across disparate tools.

Prompt Optimizer

The Optimizer tool is designed to enhance existing prompts. Users can paste any prompt, whether from an external source or selected from their personal Library, and the Optimizer will analyze and rewrite it for improved clarity, specificity, and effectiveness. It provides structured improvements across key dimensions such as instruction clarity, added constraints, defined output formats, and inclusion of examples. Each optimization is saved in a history log, and optimized prompts can be sent to the Prompt Assistant for testing with a single click.
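The four dimensions named above (instruction, constraints, output format, examples) can be shown as a small template helper. This is only a sketch of what a structured prompt might look like; `build_structured_prompt` and its section labels are hypothetical and are not Prompt Builder's output format.

```python
from typing import Sequence


def build_structured_prompt(
    instruction: str,
    constraints: Sequence[str] = (),
    output_format: str = "",
    examples: Sequence[str] = (),
) -> str:
    """Assemble a prompt from labelled sections, skipping any that are empty."""
    sections = [f"Task: {instruction.strip()}"]
    if constraints:
        sections.append("Constraints:\n" + "\n".join(f"- {c}" for c in constraints))
    if output_format:
        sections.append(f"Output format: {output_format}")
    if examples:
        sections.append("Examples:\n" + "\n".join(f"- {e}" for e in examples))
    return "\n\n".join(sections)


if __name__ == "__main__":
    print(
        build_structured_prompt(
            "Summarize the article",
            constraints=["Use at most three sentences"],
            output_format="bullet points",
        )
    )
```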

Prompt Library & Community Templates

This feature offers a dual-purpose repository for prompt management and discovery. The "My Prompts" section allows users to save, pin, organize, and tag their best prompt versions for easy reuse across projects and teams. Simultaneously, the "Community Prompts" section provides a curated library of reusable prompts and templates shared by other users, searchable and filterable by category and model. This fosters knowledge sharing and provides immediate, proven starting points for common tasks, from creative writing to data analysis.
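A library with saving, pinning, tagging, and filtering by model could be modelled roughly as below. The `SavedPrompt` and `PromptLibrary` classes and their fields are hypothetical, intended only to make the description concrete rather than to reflect how Prompt Builder stores prompts.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class SavedPrompt:
    title: str
    text: str
    model: str  # target model the prompt was written for
    tags: Set[str] = field(default_factory=set)
    pinned: bool = False


class PromptLibrary:
    """In-memory store supporting save and filtered search."""

    def __init__(self) -> None:
        self._prompts: List[SavedPrompt] = []

    def save(self, prompt: SavedPrompt) -> None:
        self._prompts.append(prompt)

    def search(
        self, tag: Optional[str] = None, model: Optional[str] = None
    ) -> List[SavedPrompt]:
        """Filter by tag and/or model; pinned prompts are listed first."""
        matches = [
            p
            for p in self._prompts
            if (tag is None or tag in p.tags) and (model is None or p.model == model)
        ]
        # sorted() is stable, so save order is kept within each group.
        return sorted(matches, key=lambda p: not p.pinned)
```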

Use Cases

LLMWise

Software Development

Developers can use LLMWise to streamline coding tasks. The platform sends code-related prompts to capable models, such as GPT, so that developers receive suggestions and code snippets that improve their productivity.

Creative Writing

Writers and content creators can leverage LLMWise for generating creative content. By utilizing the smart routing feature, they can direct prompts to models like Claude, which excel in narrative and creative writing, thus producing captivating stories or engaging marketing content.

Translation Services

Businesses requiring translation services can benefit from LLMWise by routing their translation prompts to models like Gemini. This ensures high-quality translations that maintain the original meaning and tone, providing companies with reliable multilingual support.

Market Research

Market researchers can utilize LLMWise to analyze and synthesize large volumes of data. By comparing outputs from multiple models, researchers can gain diverse perspectives on market trends, consumer behavior, and competitive analysis, leading to more informed decision-making.

Prompt Builder

Content Creation and Marketing

Marketing professionals and content creators can use Prompt Builder to rapidly generate and refine copy for blogs, social media posts, ad copy, and email campaigns. The SMM Bot feature specifically streamlines creating platform-ready content for X, LinkedIn, and Instagram by generating tailored posts with appropriate tones, hashtags, and calls-to-action. This ensures brand-consistent, engaging content is produced efficiently for multiple channels from a single brief.

Technical Development and Coding

Developers and engineers can leverage the platform to create precise prompts for code generation, debugging, documentation, and system design explanations. By generating prompts tuned for models like Claude or GPT-4, which are strong in reasoning, they can get more accurate, syntactically correct code snippets and detailed technical explanations, saving hours of manual coding and research time.

Research and Data Analysis

Academics, analysts, and students can utilize Prompt Builder to craft detailed prompts for summarizing complex research papers, extracting insights from datasets, generating literature reviews, or formulating hypotheses. The ability to optimize prompts for clarity and structure ensures the AI provides comprehensive, well-formatted, and relevant outputs, making the research process more systematic and productive.

Business Process Automation

Business professionals and operations teams can build a library of standardized prompts to automate routine tasks such as drafting reports, generating meeting agendas and minutes, creating standard operating procedures, or formatting data. This ensures consistency in output quality, reduces repetitive work, and allows team members to reuse and share effective prompt templates, scaling best practices across the organization.

Overview

About LLMWise

LLMWise is an innovative platform designed to streamline the use of large language models (LLMs) from various leading AI providers. By offering a single API, LLMWise empowers developers to access models from OpenAI, Anthropic, Google, Meta, xAI, and DeepSeek, among others, with intelligent routing capabilities. This means that developers no longer need to juggle multiple AI subscriptions or manage separate API keys for different tasks. Instead, LLMWise intelligently selects the most suitable model for each request, whether that be for coding, creative writing, or translation. The platform is tailored for developers who seek efficiency and effectiveness in their AI applications without the added complexity of managing multiple services. With features like smart routing, blending outputs, and circuit-breaker failover, LLMWise provides a robust and resilient solution that enhances productivity and optimizes costs. Its unique pricing structure allows users to pay only for what they use, making it a cost-effective choice for businesses and individuals alike.

About Prompt Builder

Prompt Builder is a sophisticated, all-in-one prompt engineering workspace designed to streamline the entire lifecycle of creating and deploying effective AI prompts. It serves as a centralized platform for writing, testing, optimizing, and managing prompts across a vast array of leading large language models (LLMs). The core value proposition lies in eliminating the inefficiencies of manual prompt crafting. Instead of spending hours rewriting and adapting instructions for different AI systems, users describe their task in plain English, select a target model, and Prompt Builder generates a professionally structured, model-optimized draft in seconds. This draft can then be interactively refined within a built-in chat interface, saved into a personal or community library, and executed directly within the platform. It is an indispensable tool for AI practitioners, content creators, developers, marketers, and any professional seeking to maximize the quality, consistency, and reusability of their AI interactions without being locked into a single model's ecosystem.

Frequently Asked Questions

LLMWise FAQ

How does LLMWise ensure optimal model selection?

LLMWise employs a smart routing mechanism that analyzes each prompt and determines the most suitable model for the specific task, thereby enhancing the quality of responses.

Can I use my existing API keys with LLMWise?

Yes, LLMWise allows you to bring your own API keys, which means you can use your existing keys at provider prices or opt for pay-per-use with LLMWise credits, offering flexibility in billing.

What happens if an AI provider goes down?

LLMWise features a circuit-breaker failover system that automatically reroutes requests to backup models when a provider is unavailable, ensuring that your applications remain operational without interruption.

Is there a subscription fee for using LLMWise?

No, LLMWise operates on a pay-as-you-go model. Users pay only for what they use, starting from $0, and do not incur any monthly subscription fees, making it a cost-effective solution for accessing multiple models.

Prompt Builder FAQ

What AI models does Prompt Builder support?

Prompt Builder supports a wide and growing range of leading large language models. This includes OpenAI's GPT series, Anthropic's Claude, Google's Gemini, Meta's Llama, Mistral AI's models, DeepSeek, xAI's Grok, Perplexity, and Cohere. The platform is designed to be model-agnostic, allowing you to generate prompts tailored for each specific model's strengths and expected input formats.

How does the free plan work?

The free plan offers a starting point for exploring the platform's core capabilities. It includes 25 assistant requests per month, allowing you to test and run prompts within the built-in Prompt Assistant using various models. The plan provides access to the Prompt Generator, Optimizer, and Library features. No credit card is required to sign up, so you can evaluate the tool's effectiveness for your workflow at no cost.

Can I use prompts created in Prompt Builder outside of the platform?

Absolutely. Prompts created, optimized, and saved within your Prompt Builder Library are yours to use. You can easily copy any prompt and paste it directly into the native interface of your chosen AI model, such as the ChatGPT website, Claude.ai, or Google Gemini. The platform is designed to enhance prompt creation for use anywhere, while also providing the convenience of an integrated testing environment.

How does the Prompt Optimizer improve my existing prompts?

The Prompt Optimizer acts as an AI-powered editor for your prompts. It analyzes the provided text and applies structured enhancements focused on key areas: improving overall clarity and instruction specificity, adding necessary constraints to narrow the output, defining a clear format for the response (e.g., JSON, markdown, bullet points), and suggesting or incorporating examples to guide the model. This process transforms vague or generic prompts into precise, high-yield instructions.

Alternatives

LLMWise Alternatives

LLMWise is an API platform designed for access to large language models (LLMs) from major providers including OpenAI, Anthropic, Google, Meta, xAI, and DeepSeek. As a solution in the AI Assistants category, it aims to simplify the management of multiple AI providers by offering intelligent routing that matches prompts to the most suitable model. Users seek alternatives to LLMWise for various reasons, including pricing structures, specific feature sets that may better align with their needs, or compatibility with existing platforms. When evaluating alternatives, it is essential to consider ease of integration, performance across different tasks, flexibility in payment options, and the ability to customize features.

Prompt Builder Alternatives

Prompt Builder is a comprehensive AI prompt engineering workspace designed to streamline the process of creating, optimizing, and managing prompts for large language models. It falls into the category of AI assistants and productivity tools, specifically built for developers, content creators, and professionals who regularly interact with models like GPT, Claude, and Gemini. The platform allows users to transform a simple idea into a refined, executable prompt through an intuitive drafting, testing, and versioning system.

Users may seek alternatives to Prompt Builder for various reasons, including budget constraints, specific feature requirements not covered by the platform, or a need for deeper integration within a particular ecosystem or workflow. Some may prioritize open-source solutions, while others might look for a tool with a different user interface or collaboration capabilities. The search often stems from a desire to find the optimal balance of cost, functionality, and ease of use for their unique projects.

When evaluating alternatives, key considerations should include the range of supported AI models, the sophistication of prompt testing and optimization tools, and the ability to organize and reuse prompt libraries. Security and data privacy policies are critical for handling sensitive prompts, while collaboration features can be vital for team use. Ultimately, the best choice depends on aligning the tool's core strengths with the user's primary objective: efficiently producing high-quality, reliable outputs from AI models.